Keep model performance reliable over time

Model performance can change over time as data and conditions evolve. Monitoring during development or validation alone is not sufficient.

Yields supports ongoing model performance monitoring within a single Model Risk Management software platform.

The model performance challenge in real use

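After deployment, model performance is hard to control: visibility into real behavior is limited, metrics are scattered across tools, and clear thresholds for action are often missing.

This makes performance issues easy to miss.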

How model performance works with Yields

Yields provides Model Risk Management software that helps teams take control of performance monitoring in four steps.

Configure data and tests

Define performance data and select standard or custom tests for each model.

Run tests

Execute performance tests in a consistent and repeatable way.

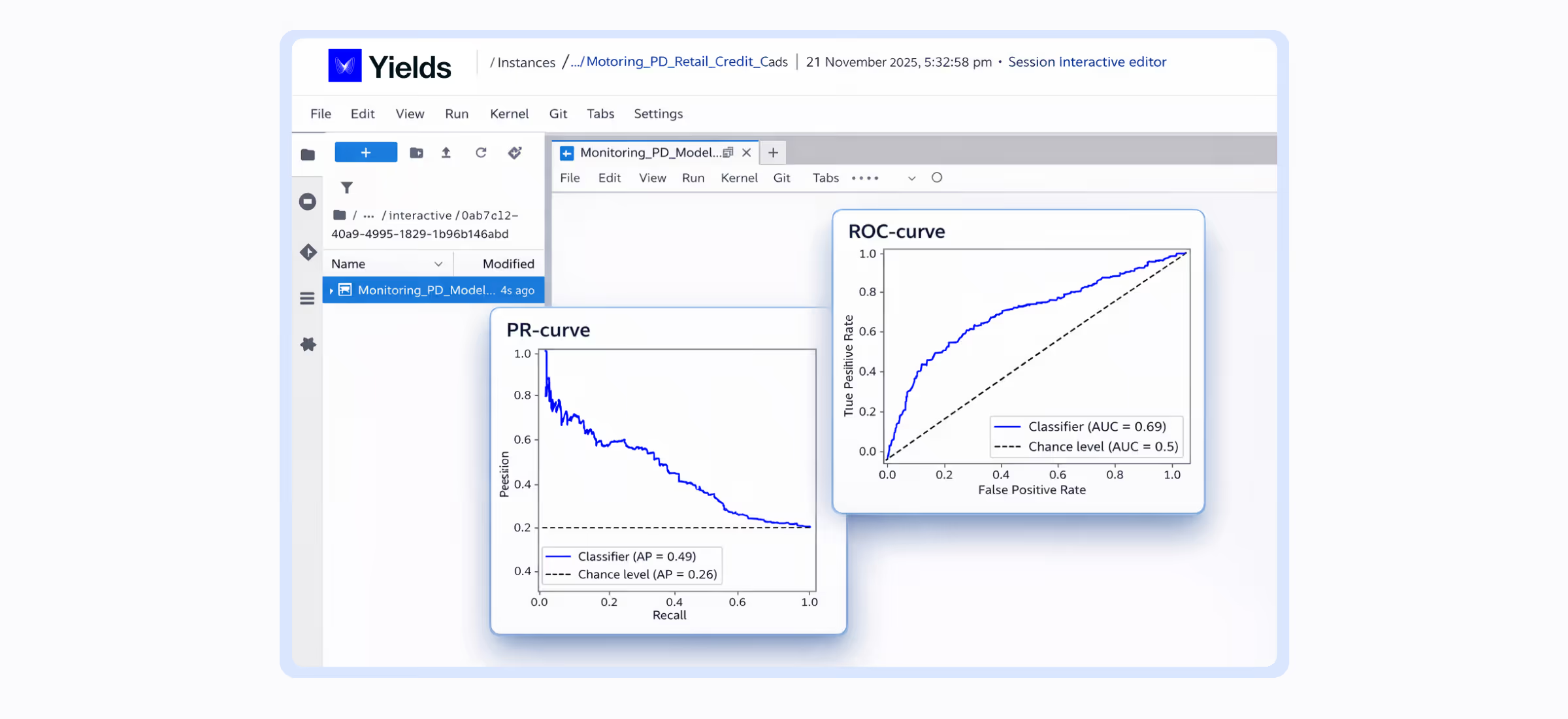

Deep dive analysis

Review results to understand performance changes, drift or deterioration.
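
As an illustration of what a drift check can look like, here is a minimal sketch of the Population Stability Index (PSI), a widely used measure of how far a recent score distribution has moved from a reference one. This is a generic example and not Yields' API: the function name, the bin count, and the sample data are all assumptions made for illustration.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """PSI between a reference sample and a recent sample of model scores.

    Hypothetical helper for illustration; buckets are equal-width over the
    range of the reference sample.
    """
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if every reference value is identical.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped to the edge buckets.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: development-time scores versus shifted production scores.
reference_scores = [i / 100 for i in range(100)]
recent_scores = [x + 0.3 for x in reference_scores]
psi = population_stability_index(reference_scores, recent_scores)
```

A PSI of zero means the two distributions fall into the buckets in identical proportions. Common rules of thumb treat values above roughly 0.1 as worth reviewing and above roughly 0.25 as a significant shift, but suitable cut-offs depend on the model and should be set per use case.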

Monitor at scale

Track model performance across portfolios and over time.

Who benefits from model performance monitoring?

Model developers

Model developers rely on continuous monitoring to ensure their models maintain their predictive power and deliver reliable results in production.

Model validators

Model validators need robust, transparent data on model behavior to assess risks and confirm regulatory compliance before and after deployment.

Model performance in the age of AI

AI- and machine-learning-driven models often change behavior more quickly than traditional models. Without proactive performance tracking, the resulting drift can go unnoticed until it affects business decisions.

Yields applies the same performance framework to both traditional and AI-influenced models, ensuring consistent oversight even as model complexity evolves.

Ready to maintain reliable model performance?

See how Yields helps teams keep model performance stable as part of its Model Risk Management software.

FAQ

Model performance refers to how reliable a model remains over time. Continuous monitoring is crucial because model performance can change as data, behavior, and underlying conditions evolve after deployment. Monitoring during development or validation alone is not sufficient to maintain oversight.

The main challenges teams face in controlling model performance after deployment include:

- Limited visibility into real behavior

- Metrics scattered across various tools

- Lack of clear thresholds for action

- Faster change and drift in AI and machine learning models

- Difficulty in attributing performance changes to specific factors
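
To make the point about thresholds concrete, the sketch below maps a metric value to a traffic-light status. It is a generic illustration rather than Yields functionality: the `Threshold` class, the `status` function, and the cut-off values are hypothetical, and it assumes a metric where higher is better (for example, AUC).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    """Hypothetical cut-offs for a higher-is-better metric."""
    amber: float  # below this value, the result should be reviewed
    red: float    # below this value, the result requires action


def status(value: float, threshold: Threshold) -> str:
    """Return 'green', 'amber', or 'red' for a metric value."""
    if value < threshold.red:
        return "red"
    if value < threshold.amber:
        return "amber"
    return "green"


# Illustrative cut-offs only; real thresholds are model-specific.
auc_threshold = Threshold(amber=0.75, red=0.70)
print(status(0.82, auc_threshold))  # green
print(status(0.72, auc_threshold))  # amber
print(status(0.65, auc_threshold))  # red
```

Agreeing on cut-offs like these in advance turns a raw metric into a clear signal for when someone needs to act.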

Yields' software helps teams take control of performance monitoring through a structured process:

Configure data and tests: Define performance data and select standard or custom tests for each model.

Run tests: Execute performance tests in a consistent and repeatable way.

Deep dive analysis: Review results to understand performance changes, drift, or deterioration.

Monitor at scale: Track model performance across portfolios and over time.

AI and machine learning-driven models can change behavior more quickly than traditional models. Yields addresses this by applying the same consistent performance framework to both traditional and AI-influenced models, ensuring proactive tracking and oversight even as model complexity evolves.

Performance monitoring benefits both model developers and model validators:

Model developers: Gain confidence that their models continue to perform as expected after deployment.

Model validators: Receive clear performance evidence to support independent review, decision-making, and regulatory compliance.