The Three Lines Of Defence In Model Risk Management

The Three Lines of Defence Model is a widely adopted governance framework that financial institutions, particularly banks and other regulated firms, apply to achieve effective model risk management (MRM).

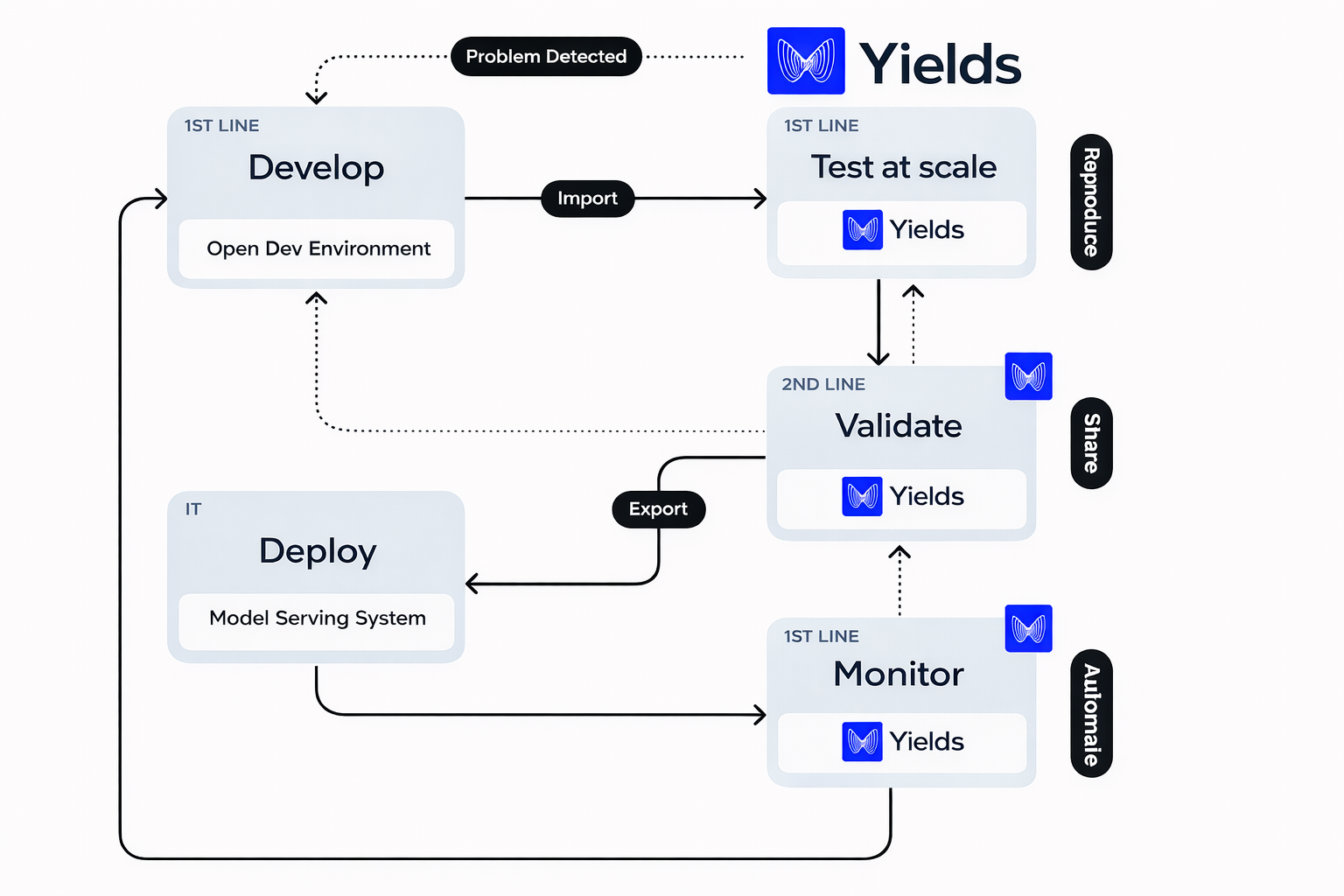

Before elaborating further on the three lines of defence, it is important to understand that model risk management is not a sequential or purely linear process. The process, which starts from the ideation and development of a model and extends throughout its operational lifetime, requires continuous interaction and feedback loops between different stakeholders. These stakeholders collectively make up the so-called three lines.

Through multiple iterations between the three lines of defence, an organization can assure the quality, robustness, and continued relevance of models in production. Moreover, this interaction reduces the risk of model failure and supports timely remediation when model performance deteriorates.

By clarifying roles and responsibilities, the Three Lines of Defence Model enhances governance, accountability, and risk management. Information is centrally managed and shared, and activities are coordinated to avoid duplication of effort and gaps in coverage.

The original driver for applying this concept to model risk management was supervisory guidance, most notably SR 11-7, issued by the US Federal Reserve, which states:

“While there are several ways in which banks can assign the responsibilities associated with the roles in model risk management, it is important that reporting lines and incentives be clear, with potential conflicts of interest identified and addressed.”

Although SR 11-7 explicitly refers to banks, its principles have been broadly adopted across other financial institutions. Since 2021, these principles have been supplemented by additional supervisory expectations, including ECB and EBA guidance, UK PRA supervisory statements, and increasing regulatory focus on machine learning (ML), artificial intelligence (AI), data governance, and model explainability. Institutions therefore typically treat SR 11-7 as a baseline rather than a complete standard.

Some institutions may not implement all three lines of defence in a fully separate manner. For example, internal model audit capabilities may be limited or partially outsourced, and certain validation or governance activities may be centralized at group level.

Organization of the three lines of defence

The first line consists of model developers, model owners, model users, and roles responsible for the design and maintenance of the model landscape (often embedded within enterprise architecture, MLOps, or platform teams).

The second line comprises model validation and model governance functions, operating independently from model development.

The third line is formed by internal audit.

To understand the interaction between these lines, it is helpful to consider a typical model lifecycle. While institutions may differ in implementation, a representative lifecycle includes:

- Model proposal and use-case definition

- Model development and testing

- Pre-implementation review

- Independent validation and challenge

- Approval and controlled deployment

- Ongoing monitoring and periodic review

- Model change, redevelopment, or retirement

This lifecycle should be understood as continuous, particularly for ML and data-driven models where monitoring, retraining, and redeployment are integral parts of the process.
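The lifecycle can be sketched as a small state machine in Python. The stage names and the allowed transitions below are illustrative assumptions rather than a prescribed standard; the point is that monitoring and change feed back into development instead of ending the process.

```python
from enum import Enum


class Stage(Enum):
    PROPOSAL = "proposal"
    DEVELOPMENT = "development"
    PRE_IMPLEMENTATION_REVIEW = "pre-implementation review"
    VALIDATION = "validation"
    APPROVAL_AND_DEPLOYMENT = "approval and deployment"
    MONITORING = "monitoring"
    CHANGE = "change"
    RETIRED = "retired"


# Assumed transitions. The loops back to DEVELOPMENT and the MONITORING
# self-loop reflect that the lifecycle is iterative, not a one-way sequence.
ALLOWED_TRANSITIONS = {
    Stage.PROPOSAL: {Stage.DEVELOPMENT, Stage.RETIRED},
    Stage.DEVELOPMENT: {Stage.PRE_IMPLEMENTATION_REVIEW},
    Stage.PRE_IMPLEMENTATION_REVIEW: {Stage.VALIDATION, Stage.DEVELOPMENT},
    Stage.VALIDATION: {Stage.APPROVAL_AND_DEPLOYMENT, Stage.DEVELOPMENT},
    Stage.APPROVAL_AND_DEPLOYMENT: {Stage.MONITORING},
    Stage.MONITORING: {Stage.MONITORING, Stage.CHANGE},
    Stage.CHANGE: {Stage.DEVELOPMENT, Stage.RETIRED},
    Stage.RETIRED: set(),
}


def advance(current: Stage, target: Stage) -> Stage:
    """Move a model to the next stage, rejecting transitions the policy does not allow."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"transition {current.value} -> {target.value} is not allowed")
    return target
```

Under these assumptions, moving a model straight from development to deployment, skipping review and validation, raises an error, which is the kind of control an inventory tool would be expected to enforce.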

The First Line of Defence

Model developers, model owners, model users, and model landscape or platform stakeholders form the first line.

Model owners propose model ideas based on business requirements. Each model is identified, classified, and tiered according to its materiality, complexity, and potential impact.
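As a minimal sketch of tiering, the Python function below combines three scores on an assumed 1-to-3 scale. The `ModelProfile` fields, the scale, and the cut-offs are hypothetical; each institution defines its own tiering methodology in its model risk policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical tiering inputs, each scored 1 (low) to 3 (high)."""
    name: str
    materiality: int  # e.g. size of exposure or P&L that depends on the model
    complexity: int   # e.g. 1 = simple rule, 3 = ML or stochastic model
    impact: int       # e.g. 3 = used for regulatory, capital, or accounting purposes


def assign_tier(profile: ModelProfile) -> int:
    """Return tier 1 (highest risk) to 3 (lowest risk) under an assumed rule."""
    scores = (profile.materiality, profile.complexity, profile.impact)
    if any(s not in (1, 2, 3) for s in scores):
        raise ValueError("each score must be 1, 2 or 3")
    if profile.impact == 3:
        return 1  # high-impact use forces the highest tier regardless of other scores
    total = sum(scores)
    if total >= 7:
        return 1
    if total >= 5:
        return 2
    return 3
```

For example, a pricer scored `materiality=3, complexity=2, impact=3` lands in tier 1 under this rule, while a model scoring 1 on every dimension lands in tier 3.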

Model developers are responsible for building models and ensuring they are thoroughly tested prior to deployment. This includes theoretical soundness, data preparation, numerical implementation, and robustness testing.

Model users provide feedback on model usage and outcomes. Persistent overrides or workarounds are key indicators that a model may no longer be fit for purpose or that its limitations are not adequately addressed.
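Override analysis can be made concrete with a rolling override rate. In this hypothetical Python sketch, each model decision is recorded as overridden or not, and the model is flagged for review when the override share over the last `window` decisions exceeds `threshold`; both parameters are assumptions to be set per model.

```python
from collections import deque


class OverrideMonitor:
    """Rolling override rate for one model (window and threshold are assumptions)."""

    def __init__(self, window: int = 100, threshold: float = 0.2) -> None:
        self.decisions = deque(maxlen=window)  # True = user overrode the model output
        self.threshold = threshold

    def record(self, overridden: bool) -> None:
        self.decisions.append(overridden)

    def override_rate(self) -> float:
        if not self.decisions:
            return 0.0
        return sum(self.decisions) / len(self.decisions)

    def needs_review(self) -> bool:
        # Alert only once the window is full, so a handful of early overrides
        # on a new model does not trigger a review on its own.
        window_full = len(self.decisions) == self.decisions.maxlen
        return window_full and self.override_rate() > self.threshold
```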

Responsibilities related to the model landscape have evolved since 2021. Rather than assigning them to a single, standalone “model landscape architect” role, many institutions now distribute them across enterprise architecture, IT, data, and MLOps functions, which together ensure consistency, reuse, and maintainability across the model estate.

The first line is also responsible for producing comprehensive model documentation. This includes:

- Scope and intended use

- Theoretical foundations and assumptions

- Data sources, lineage, and quality controls

- Methodology, parameters, and limitations

- Implementation details and testing results

For example, for a Black–Scholes model used to price and risk-manage European equity options (exercisable only at expiry), the documentation would specify applicable settlement delays, maturity limits, and known model limitations.
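To illustrate how documented limits can be enforced in code, the sketch below prices a European call on a non-dividend-paying stock under Black–Scholes and rejects maturities outside an assumed documented range. `MAX_MATURITY_YEARS` is a hypothetical value, and settlement delays are not modelled here.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


# Hypothetical documented scope limit; the real value comes from the model documentation.
MAX_MATURITY_YEARS = 5.0


def bs_call_price(spot: float, strike: float, maturity: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call on a non-dividend-paying stock.

    Raises ValueError for inputs outside the documented scope, so out-of-scope
    use is surfaced rather than silently priced. Known limitation: a single
    constant volatility cannot reproduce an implied volatility skew.
    """
    if not 0.0 < maturity <= MAX_MATURITY_YEARS:
        raise ValueError(f"maturity {maturity} outside documented range (0, {MAX_MATURITY_YEARS}]")
    if spot <= 0 or strike <= 0 or vol <= 0:
        raise ValueError("spot, strike and volatility must be positive")
    sqrt_t = math.sqrt(maturity)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * maturity) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * maturity) * norm_cdf(d2)
```

With spot and strike at 100, one year to maturity, a 5% rate, and 20% volatility, the function returns approximately 10.45, the standard textbook value; a 10-year maturity raises `ValueError` instead of returning a price.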

The first line must ensure that the underlying theory is valid, data is appropriate and well understood, and implementation is consistent with the documented methodology. Once implementation is complete, the model is described through multiple documents (e.g. specification, implementation notes, and user guidance), which serve as input for independent validation.
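Before documentation is handed to validation, a simple completeness check can catch missing content early. The section keys below are hypothetical and simply mirror the documentation contents listed earlier; the function returns any section that is absent or empty.

```python
from typing import List, Mapping

# Hypothetical section keys mirroring the documentation contents listed earlier.
REQUIRED_SECTIONS = (
    "scope_and_intended_use",
    "theory_and_assumptions",
    "data_sources_lineage_quality",
    "methodology_parameters_limitations",
    "implementation_and_testing",
)


def missing_sections(document: Mapping[str, str]) -> List[str]:
    """Return the required sections that are absent or contain only whitespace."""
    return [
        section
        for section in REQUIRED_SECTIONS
        if not document.get(section, "").strip()
    ]
```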

Model maintenance remains primarily a first-line responsibility. Model monitoring, including performance metrics, stability checks, drift detection, and override analysis, is typically executed by the first line, with independent challenge and review by the second line, particularly for higher-tier or ML-based models.
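Drift detection is often implemented with the Population Stability Index (PSI), which compares the binned distribution of a variable in a recent sample with a reference sample: PSI is the sum over bins of (a_i - e_i) * ln(a_i / e_i), where e_i and a_i are the reference and recent shares in bin i. The Python sketch below uses equal-width bins, and the 0.1 and 0.25 thresholds are a common industry rule of thumb, not a regulatory requirement.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a reference sample (e.g. development data) and a recent sample.

    Bins are equal-width over the range of the reference sample; recent values
    outside that range are counted in the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid division by zero for a constant reference

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm below stays finite.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def psi_status(psi: float) -> str:
    """Map a PSI value to an action using a common rule of thumb (assumed thresholds)."""
    if psi < 0.1:
        return "stable"
    if psi < 0.25:
        return "monitor"
    return "investigate"
```

Identical samples give a PSI of zero, while a sample shifted well outside the reference range lands in the "investigate" band.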

It is good practice to separate model execution environments from monitoring and control infrastructure to support independence and transparency.

How can technology improve the efficiency of the first line of defence?

Technology increasingly plays a key role in improving first-line efficiency and control quality.

Modern model risk management platforms such as Yields support the automation of repetitive and resource-intensive analyses, including backtesting, benchmarking, sensitivity analysis, and data quality checks. Automation allows model risk professionals to focus on expert judgment, interpretation, and remediation rather than manual execution.
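Backtesting takes many forms. One widely used example for market risk models counts the days on which the realised loss exceeded the 99% one-day Value-at-Risk (VaR) and maps the count over 250 trading days to the Basel traffic-light zones (green up to 4 exceptions, yellow 5 to 9, red 10 or more). The Python sketch below is a generic illustration of that test, not a description of how Yields implements backtesting.

```python
from typing import Sequence


def count_exceptions(pnl: Sequence[float], var: Sequence[float]) -> int:
    """Count days on which the realised loss exceeded the reported VaR.

    VaR is given as a positive loss amount, so an exception is pnl < -var.
    """
    if len(pnl) != len(var):
        raise ValueError("pnl and var must have the same length")
    return sum(1 for p, v in zip(pnl, var) if p < -v)


def traffic_light(exceptions: int) -> str:
    """Basel traffic-light zone for 250 daily observations of 99% VaR."""
    if exceptions <= 4:
        return "green"
    if exceptions <= 9:
        return "yellow"
    return "red"
```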

Benchmark models (e.g. extensions of Black–Scholes incorporating stochastic interest rates) can be run, and data quality assessments executed, systematically and reproducibly. In addition, ML techniques, including AutoML for building challenger models and anomaly detection for data and output monitoring, are increasingly used, subject to appropriate governance.
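A systematic data quality assessment can start from simple, reproducible rules. The Python sketch below counts missing (None or NaN) and out-of-range values per field; the field names and the plausibility bounds passed in `bounds` are hypothetical inputs that would come from the model documentation.

```python
import math
from typing import Dict, Mapping, Optional, Sequence, Tuple


def data_quality_report(
    rows: Sequence[Mapping[str, Optional[float]]],
    bounds: Mapping[str, Tuple[float, float]],
) -> Dict[str, Dict[str, int]]:
    """Count missing and out-of-range values for each field listed in `bounds`.

    Bounds are inclusive, e.g. {"vol": (0.0, 5.0)} for implied volatility.
    """
    report = {field: {"missing": 0, "out_of_range": 0} for field in bounds}
    for row in rows:
        for field, (low, high) in bounds.items():
            value = row.get(field)
            if value is None or math.isnan(value):
                report[field]["missing"] += 1
            elif not low <= value <= high:
                report[field]["out_of_range"] += 1
    return report
```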

Through Yields, institutions can generate reproducible testing artefacts, maintain a clear audit trail, and monitor model quality over time. Integration with analytical tooling enables interactive documentation while preserving control and traceability.

By strategically owning the validation and monitoring processes, institutions can ensure alignment with regulatory expectations such as SR 11-7, ECB and PRA guidance, and emerging AI governance frameworks.

Second Line of Defence

Model validation and model governance together form the second line and typically report to the head of model risk management or an equivalent independent function.

Model validation independently reviews the documentation and implementation produced by the first line. Validators assess conceptual soundness, test model behaviour, review limitations, and produce formal findings and recommendations.

Model governance ensures compliance with model risk policies and procedures throughout the model lifecycle, from initial identification and inventory inclusion to retirement. Governance activities include advising on policy compliance, granting conditional or temporary approvals, managing waivers, and overseeing remediation actions.

For example, a one-off approval may be granted for a trade outside a model’s standard scope, subject to documented conditions and compensating controls.
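Such a one-off approval can be represented as a time-limited waiver that usage controls check before an out-of-scope trade is accepted. The `Waiver` record, the maturity-based scope rule, and the function below are a hypothetical Python sketch; in practice a waiver record would likely also capture the approver, the compensating controls, and a link to the governance decision.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Waiver:
    """Hypothetical record of a one-off approval granted by model governance."""
    trade_id: str
    conditions: str  # documented conditions and compensating controls
    expires: date


def may_use_model(
    trade_id: str,
    maturity_years: float,
    max_maturity: float,
    waiver: Optional[Waiver],
    today: date,
) -> bool:
    """Allow in-scope trades; allow out-of-scope trades only under a valid waiver."""
    if maturity_years <= max_maturity:
        return True
    return (
        waiver is not None
        and waiver.trade_id == trade_id
        and today <= waiver.expires
    )
```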

Effective validation relies on active dialogue and challenge between the first and second lines. Validators critically assess data choices, methodological decisions, assumptions, limitations, and use-case alignment.

Independence of the second line remains essential. This includes separate reporting lines, sufficient expertise, and explicit authority to challenge or block model deployment when necessary.

Once models are in production, periodic review and revalidation take place at a frequency commensurate with model tier, complexity, and risk profile. For ML models, this often includes more frequent monitoring and review cycles.
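A review calendar driven by tier can be sketched as follows. The intervals in `REVIEW_INTERVAL_MONTHS` and the halving for ML models are assumed values for illustration; actual frequencies are set in each institution's model risk policy.

```python
from datetime import date

# Hypothetical review intervals in months by tier (tier 1 = highest risk).
REVIEW_INTERVAL_MONTHS = {1: 12, 2: 24, 3: 36}
ML_INTERVAL_FACTOR = 0.5  # assumed: ML models are reviewed twice as often


def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, capping the day at 28 to keep it valid in every month."""
    total = d.month - 1 + months
    return date(d.year + total // 12, total % 12 + 1, min(d.day, 28))


def next_review(last_review: date, tier: int, is_ml: bool = False) -> date:
    """Date of the next periodic review for a model of the given tier."""
    months = REVIEW_INTERVAL_MONTHS[tier]
    if is_ml:
        months = max(1, int(months * ML_INTERVAL_FACTOR))
    return add_months(last_review, months)
```

Capping the day at 28 is a deliberate simplification that avoids invalid dates such as 31 February; a production scheduler would more likely roll to the last business day of the month.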

Model Validation Framework

In line with SR 11-7, model validation continues to rest on three core elements, which have been expanded in practice since 2021.

Conceptual soundness: The model should appropriately capture the key features of the product or risk it represents. For example, an equity options model should be able to reflect implied volatility skew.

Ongoing monitoring: Validators assess whether known limitations are breached, review monitoring metrics, and evaluate changes in data, code, or usage. For ML models, this includes monitoring for data drift, model drift, and explainability stability.

Outcomes analysis: Validators compare model outputs with corresponding actual outcomes, for example through backtesting, and use sensitivity analysis, benchmarking, and stability testing to assess how outputs respond to changes in inputs.
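Sensitivity analysis is commonly implemented as bump-and-revalue: each input is shifted up and down while the others are held fixed, and the change in output is measured. The generic Python sketch below computes central finite differences for any model exposed as a function of named inputs; the 1% relative bump is an assumption, and the toy model in the example is purely illustrative.

```python
from typing import Callable, Dict


def sensitivities(
    model: Callable[..., float],
    base: Dict[str, float],
    rel_bump: float = 0.01,
) -> Dict[str, float]:
    """Central finite-difference sensitivity of a model output to each named input."""
    result = {}
    for name, value in base.items():
        # Relative bump; fall back to an absolute bump when the input is zero.
        h = abs(value) * rel_bump or rel_bump
        up = model(**{**base, name: value + h})
        down = model(**{**base, name: value - h})
        result[name] = (up - down) / (2 * h)
    return result
```

For the toy model `lambda x, y: x**2 + 3*y` at `x=2.0, y=1.0`, the sensitivities come out at approximately 4.0 and 3.0. Repeating the calculation on successive dates and comparing the results is one simple way to support stability testing.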

These elements are increasingly complemented by reviews of data lineage, third-party dependencies, model interconnections, and operational resilience.

Automation through platforms such as Yields supports consistent execution of these activities, reduces manual effort, and improves traceability.

Third Line of Defence

Internal audit represents the third line. Its role is to provide independent assurance that the interaction between the first and second lines is effective and that model risk policies and procedures are followed.

Audits typically focus on higher-tier models and those used for regulatory purposes (e.g. stress testing, capital, or accounting models). Increasingly, audits also cover AI governance, automated controls, and end-to-end model ecosystems.

Identifying deficiencies is often constrained by the time available to audit teams. One particular challenge is detecting atypical or weak validation practices across large model portfolios.

How can machine learning improve the efficiency of the third line of defence?

Machine learning techniques can support audit activities by analysing repositories of validation reports and control evidence. Feature extraction and outlier detection can help identify reports that deviate materially from expected standards, such as missing sections or unusual structure.
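As one simple, transparent option, swapped in here for illustration since the text does not prescribe a technique, the Python sketch below scores numeric features extracted from each validation report with the modified z-score, 0.6745 * (x - median) / MAD, and flags reports that exceed 3.5 on any feature. The features themselves (for example section count, page count, number of findings) are hypothetical, and flagged reports are candidates for human review, not conclusions.

```python
import statistics
from typing import Dict, List, Sequence


def flag_outlier_reports(
    features: Dict[str, Sequence[float]],
    threshold: float = 3.5,
) -> List[str]:
    """Flag reports whose modified z-score exceeds `threshold` on any feature.

    Modified z-score = 0.6745 * (x - median) / MAD, computed per feature across
    all reports. Features with zero MAD carry no spread and are skipped.
    """
    report_ids = list(features)
    n_features = len(features[report_ids[0]])
    flagged = set()
    for j in range(n_features):
        column = [features[r][j] for r in report_ids]
        median = statistics.median(column)
        mad = statistics.median([abs(x - median) for x in column])
        if mad == 0:
            continue
        for report_id, x in zip(report_ids, column):
            if abs(0.6745 * (x - median) / mad) > threshold:
                flagged.add(report_id)
    return sorted(flagged)
```

A median-based score is used rather than mean and standard deviation so that the very reports being hunted for do not distort the baseline they are compared against.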

Conclusion

The Three Lines of Defence Model provides a robust governance structure for managing the risks that arise from financial institutions' growing reliance on models. Clear role definition, effective challenge, and coordinated use of technology help ensure that high-quality models are deployed and maintained.

While the core principles remain stable, effective implementation today requires addressing AI and ML risks, continuous monitoring, and integration of governance across the full model ecosystem.

Through centralized data, analytics, and reporting, Yields enables collaboration across the three lines of defence, supports reproducible documentation, and automates key model risk management activities.

About the Author

Maarten Baeten helps various banks and corporations manage model risk and AI governance. Maarten has extensive experience in model validation and specialises in the use and application of model risk analytics to create model standardisation and benchmarks.